Building Agentic AI Applications with a Problem-First Approach: A Practical 2026 Playbook

Agentic AI means AI systems that can think, plan, decide, and act on their own, using tools effectively to reach a goal. Unlike simple automation, agentic AI does not just follow rules. It observes a situation, chooses the next step, uses tools, and keeps going until the goal is achieved.

Many people make one big mistake when building agentic AI.

They start with the tool instead of the problem.

This is called a solution-first approach. Teams pick a large language model, an AI agent framework or a fancy automation tool and then try to force it to solve something. The result is often:

- AI agents that look smart but do not deliver real value

- Systems that break easily or behave unpredictably

- High costs with little business impact

- AI projects that never move past demos

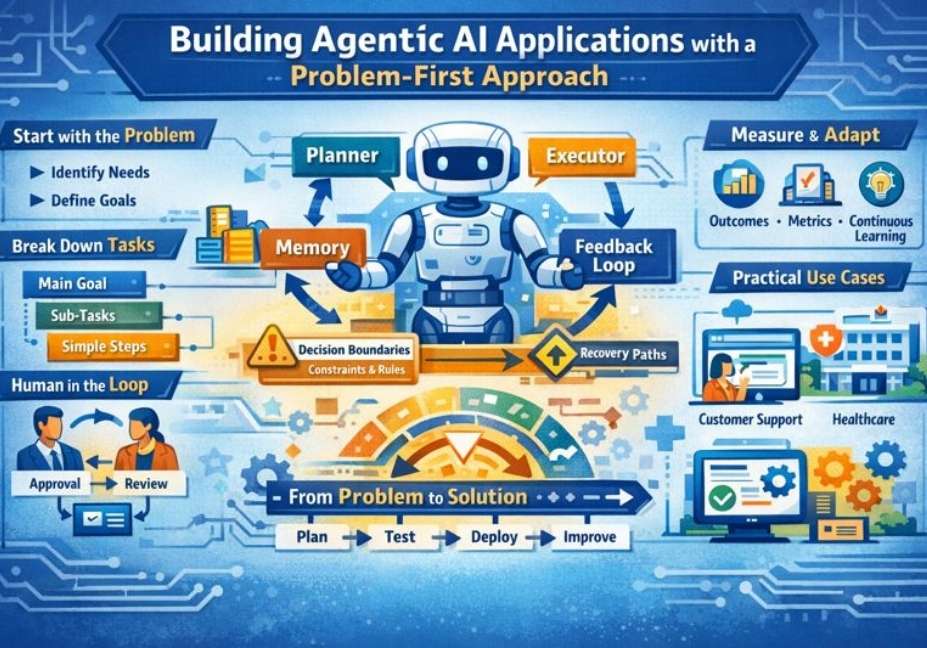

A problem-first approach flips this completely. You begin by clearly understanding the real problem, the desired outcome, and the limits of the system. Only then do you design the agent, choose the model, and define how the AI should act.

In this guide, you will learn:

- What makes agentic AI different from traditional AI automation

- Why problem-first thinking leads to safer, smarter AI agents

- How to identify problems that are worth solving with agentic AI

- How to design, build, and evaluate agentic AI applications step by step

By the end, you will understand how to build agentic AI systems that solve real problems, stay aligned with human goals, and create practical value in the real world.

Understanding Agentic AI Systems

Before building anything, it is important to clearly understand what agentic AI really is and how it is different from the AI systems we have used for years. This clarity helps avoid confusion, wasted effort, and failed AI projects.

What Is Agentic AI?

Agentic AI is a type of artificial intelligence that can set goals, plan actions, make decisions and take steps on its own to achieve a desired outcome.

In simple words, agentic AI does not just answer questions or follow fixed rules. It:

- Understands a goal

- Breaks that goal into smaller tasks

- Chooses what to do next

- Uses tools, data, or APIs

- Learns from results and adjusts its actions

An agentic AI system often includes:

- A large language model (LLM), such as GPT

- A planner to decide next steps

- Memory to remember past actions

- Tools such as databases, search, or software systems

- A feedback loop to improve decisions

This is what makes agentic AI feel more like a digital worker than a simple chatbot.

Agentic AI vs Traditional AI Automation

Traditional AI automation is mostly reactive. Agentic AI is proactive.

Traditional AI automation:

- Waits for an input

- Follows predefined rules or scripts

- Produces a single output

- Stops after completing one task

Example:

A chatbot answers a customer question and ends the conversation.

Agentic AI systems:

- Start with a goal

- Decide what actions are needed

- Act without being told every step

- Continue until the goal is reached

Example:

An AI agent handles a customer issue by checking order history, creating a support ticket, emailing the user, and following up automatically.

The key difference is decision-making autonomy. Agentic AI can decide how to reach a goal, not just what to respond.

The Evolution of Agentic Artificial Intelligence

Agentic AI did not appear overnight. It evolved step by step.

First stage: Rule-based systems

- Hard-coded logic

- “If this happens, do that”

- No learning or flexibility

Second stage: Machine learning models

- Learned from data

- Better predictions and classifications

- Still limited to specific tasks

Third stage: Large language models

- Understand language and context

- Generate human-like responses

- Still mostly reactive

Current stage: Goal-driven agentic AI

- Combines LLMs with planning and tools

- Works across multiple steps

- Adapts to new situations

- Acts independently within defined limits

This evolution is why agentic AI is now being used in customer support, healthcare operations, finance, software development, and business automation.

Understanding this shift helps you see why a problem-first approach is essential. When AI becomes more autonomous, clear goals and well-defined problems are no longer optional—they are critical.

What Makes AI Truly “Agentic”?

Not every AI system that looks smart is truly agentic. Many tools claim to be “AI agents,” but real agentic AI has a few core qualities that clearly set it apart. Understanding these qualities helps you avoid fake agents and build systems that actually work.

Autonomy, Goals, and Decision Loops

At the heart of agentic AI is autonomy.

This means the AI can:

- Work toward a goal, not just respond to prompts

- Decide what to do next without constant human input

- Repeat actions until the goal is reached

An agentic AI system operates in a decision loop:

- Observe the current situation

- Decide the best next action

- Act using a tool or response

- Check the result

- Adjust and continue

This loop allows the AI to handle multi-step tasks, recover from small failures, and adapt to changing conditions. Without this loop, an AI system is just a one-time responder, not an agent.
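The five steps of the loop can be sketched in a few lines. This is a minimal illustration, not a production agent: the `Thermostat` environment, the `run_decision_loop` function, and the `max_steps` budget are assumptions made for the example, and the "decision" is a hard-coded rule where a real agent would call a model.

```python
from dataclasses import dataclass, field


@dataclass
class Thermostat:
    """Toy environment (hypothetical): the agent must bring `temp` to `target`."""
    temp: int
    target: int


@dataclass
class LoopResult:
    reached_goal: bool
    steps: int
    history: list = field(default_factory=list)


def run_decision_loop(env: Thermostat, max_steps: int = 20) -> LoopResult:
    history = []
    for step in range(max_steps):
        # Observe the current situation and check the result of the last action.
        gap = env.target - env.temp
        if gap == 0:
            return LoopResult(True, step, history)
        # Decide the next action.
        action = "heat" if gap > 0 else "cool"
        # Act: here the "tool" just moves the temperature by one degree.
        env.temp += 1 if action == "heat" else -1
        # Record what happened so the next iteration can adjust.
        history.append((action, env.temp))
    # The step budget stops the loop if the goal is never reached.
    return LoopResult(env.temp == env.target, max_steps, history)


result = run_decision_loop(Thermostat(temp=18, target=21))
print(result.reached_goal, result.steps)
```

The step budget matters as much as the loop itself: without it, an agent that never reaches its goal would keep acting forever.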

Planning, Memory, and Tool Usage

True agentic AI combines intelligence with structure.

Planning

- Breaks big goals into smaller steps

- Chooses the correct order of actions

- Avoids unnecessary or harmful steps

Memory

- Remembers past actions and decisions

- Keeps track of user preferences or task history

- Prevents repeating the same mistakes

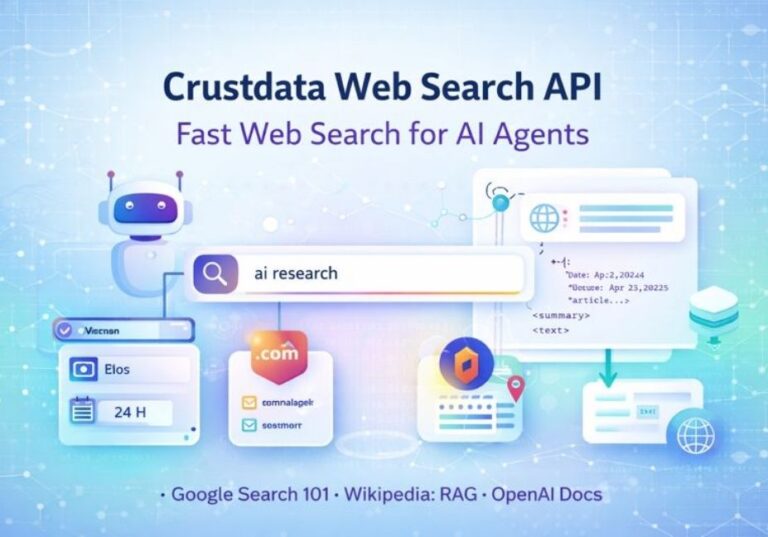

Tool usage

- Calls APIs

- Searches databases

- Reads or writes files

- Interacts with software systems

For example, an agentic AI handling invoices may:

- Read incoming emails

- Extract data from attachments

- Check records in a database

- Flag issues and notify humans

This ability to plan, remember, and use tools is what turns AI from a talking system into a working system.
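The invoice example can be sketched with a small tool registry and a memory list. Everything here is hypothetical: the `KNOWN_ORDERS` data, the `PO-xxxx;amount` message format, and the function names stand in for a real mail parser and database.

```python
from typing import Callable

# Hypothetical in-memory "database" of known purchase orders.
KNOWN_ORDERS = {"PO-1001": 250.00, "PO-1002": 980.50}


def extract_invoice(email_body: str) -> dict:
    """Toy extractor: expects 'PO-xxxx;amount' (a stand-in for real parsing)."""
    order_id, amount = email_body.strip().split(";")
    return {"order_id": order_id, "amount": float(amount)}


def check_record(invoice: dict) -> str:
    """Compare the invoice against the stored order."""
    expected = KNOWN_ORDERS.get(invoice["order_id"])
    if expected is None:
        return "unknown_order"
    return "ok" if abs(expected - invoice["amount"]) < 0.01 else "amount_mismatch"


# Tool registry: the agent can only call what is registered here.
TOOLS: dict[str, Callable] = {"extract": extract_invoice, "check": check_record}


def process_invoice(email_body: str, memory: list) -> str:
    invoice = TOOLS["extract"](email_body)
    status = TOOLS["check"](invoice)
    # Memory: record what was done so later steps (or humans) can review it.
    memory.append({"invoice": invoice, "status": status})
    return "approved" if status == "ok" else f"flagged for human review: {status}"


memory: list = []
print(process_invoice("PO-1001;250.00", memory))
print(process_invoice("PO-1002;100.00", memory))
```

Keeping the tools in an explicit registry is one simple way to limit what the agent is able to touch.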

Human Oversight vs Full Autonomy

One of the biggest fears with agentic AI is losing control. That is a real concern—and a valid one.

Fully autonomous AI can:

- Act quickly

- Scale easily

- Reduce human workload

But it can also:

- Make wrong decisions confidently

- Drift away from business goals

- Create ethical or legal risks

That is why most successful agentic AI applications use human-in-the-loop oversight. Humans:

- Approve critical actions

- Review high-risk decisions

- Set clear boundaries and rules

The goal is not to remove humans. The goal is to let AI handle routine work while humans stay in control of important outcomes.

True agentic AI is not about replacing people—it is about working with them in a smarter, safer way.

The Problem-First Methodology for Agentic AI Development

Building agentic AI without a clear problem is like starting a journey without knowing the destination. You may move fast, but you will not reach anything meaningful. A problem-first methodology keeps your agentic AI focused, useful, and safe.

Why Starting With the Problem Matters

Many AI projects fail not because the technology is bad, but because the problem was never clearly defined.

When teams skip problem definition:

- AI agents solve the wrong task

- Outputs look impressive but do not help users

- Systems become complex and hard to control

- Time and money are wasted

Agentic AI is powerful. It can plan, decide, and act. But power without direction leads to chaos. By starting with the problem, you:

- Set clear limits for AI behavior

- Reduce unexpected actions

- Build trust with users and stakeholders

A well-defined problem gives the AI agent a clear purpose, not just instructions.

Defining the Desired Outcome First

A strong problem-first approach focuses on outcomes, not features.

Instead of asking:

- “What can this AI do?”

Ask:

- “What should change after the AI acts?”

A good desired outcome is:

- Specific

- Measurable

- Useful to humans

For example:

- Reduce customer response time by 40%

- Improve task completion accuracy

- Lower operational costs without hurting quality

When outcomes are clear, agentic AI systems can be designed to optimize for results, not random activity. This also makes it easier to test and improve the system over time.
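One way to make an outcome testable is to write it down as data. The sketch below encodes "reduce customer response time by 40%"; the `OutcomeTarget` class, the metric name, and the 50-minute baseline are illustrative assumptions, not figures from a real project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeTarget:
    metric: str
    baseline: float          # value measured before the agent is introduced
    target_reduction: float  # 0.40 means "reduce by 40%"

    def threshold(self) -> float:
        """The value the metric must reach or beat."""
        return self.baseline * (1 - self.target_reduction)

    def is_met(self, observed: float) -> bool:
        return observed <= self.threshold()


# Hypothetical baseline: 50 minutes average response time.
target = OutcomeTarget("avg_response_minutes", baseline=50.0, target_reduction=0.40)
print(target.is_met(28.0))  # 28 minutes is below the 30-minute threshold
print(target.is_met(35.0))  # 35 minutes is not
```

With the target stored this way, the same check can run after every evaluation cycle.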

Aligning AI Goals With Business Objectives

Agentic AI must work for the business, not against it.

If AI goals are unclear or misaligned:

- The agent may optimize the wrong metric

- Short-term gains may cause long-term harm

- Teams lose confidence in AI systems

To avoid this:

- Translate business goals into AI goals

- Define success metrics early

- Set boundaries for acceptable behavior

For example, an AI agent focused on speed must also respect:

- Quality standards

- Compliance rules

- Customer satisfaction

Alignment ensures the AI agent acts like a reliable team member, not a risky experiment.

A problem-first methodology turns agentic AI from a shiny idea into a real-world solution that delivers value, stays controlled, and earns trust.

Identifying Problems Worth Solving With Agentic AI

Agentic AI is powerful, but it is not the right tool for every problem. One of the smartest decisions you can make is choosing where to use it and where not to. This step alone can save months of work and a lot of money.

High-Impact vs Low-Value Use Cases

High-impact problems share a few clear traits. They:

- Require multiple steps, not one action

- Change based on context or new information

- Involve decisions, not just calculations

- Happen often and consume human time

Examples of high-impact agentic AI use cases:

- Customer support workflows

- Internal process automation

- Data monitoring and reporting

- Task coordination across tools

Low-value problems are usually:

- Simple and static

- Rare or one-time tasks

- Easy to solve with rules or scripts

Using agentic AI for low-value tasks leads to:

- Over-engineering

- Higher costs

- Unnecessary risk

The goal is not to use agentic AI everywhere. The goal is to use it where it creates real leverage.

Signals That a Problem Needs an Agentic Approach

A problem is a good fit for agentic AI if you notice these signals:

- Humans constantly decide what to do next

- Tasks require context and judgment

- The workflow spans multiple tools or systems

- Manual handling causes delays or errors

- The problem keeps repeating

For example, onboarding a new customer often requires emails, document checks, system updates, and follow-ups. This is hard to automate with simple rules, but perfect for an agentic AI system.

If the problem feels like “a smart person checking, deciding, and acting,” it may need an AI agent, not a script.

When NOT to Use Agentic AI

This is where many teams go wrong.

Do not use agentic AI when:

- A simple rule or automation works fine

- The task is extremely sensitive or regulated

- Decisions must be 100% correct every time

- The cost of failure is very high

Examples include:

- Medical diagnosis without human review

- Legal judgments without oversight

- Financial transactions without limits

Agentic AI should assist, not replace, humans in high-risk areas.

Choosing not to use agentic AI is a sign of maturity, not weakness. The best AI teams know that restraint leads to better results.

Decomposing Complex Problems Into Agentic Tasks

Big problems often sink AI projects because they are too large and unclear. Agentic AI works best when complex problems are broken into small, clear, and manageable tasks. This step gives the AI direction and keeps it under control.

Task Breakdown and Goal Hierarchies

Every complex problem has many smaller parts.

Instead of giving an AI agent one huge goal, you create a goal hierarchy:

- One main goal at the top

- Smaller sub-goals underneath

- Simple tasks at the bottom

For example, the main goal might be “resolve customer issues faster.”

Sub-goals could include:

- Understand the issue

- Find relevant data

- Choose the best solution

- Communicate with the customer

Breaking problems this way helps the agent:

- Know what to focus on

- Move step by step

- Avoid confusion or looping

This makes the AI agent more reliable and predictable.
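A goal hierarchy maps naturally onto a small tree. The sketch below uses the main goal and sub-goals from the example above; the `Goal` class and the leaf tasks under the first two sub-goals are hypothetical additions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Goal:
    name: str
    children: list["Goal"] = field(default_factory=list)

    def leaf_tasks(self) -> list[str]:
        """Return the concrete tasks at the bottom of the hierarchy, in order."""
        if not self.children:
            return [self.name]
        tasks = []
        for child in self.children:
            tasks.extend(child.leaf_tasks())
        return tasks


root = Goal("Resolve customer issues faster", [
    Goal("Understand the issue", [
        Goal("Classify the message"),            # hypothetical task
        Goal("Ask a clarifying question"),       # hypothetical task
    ]),
    Goal("Find relevant data", [
        Goal("Look up order history"),           # hypothetical task
    ]),
    Goal("Choose the best solution"),
    Goal("Communicate with the customer"),
])

for task in root.leaf_tasks():
    print(task)
```

The agent only ever works on leaf tasks, while the levels above them explain why each task exists.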

Decision Boundaries and Constraints

Agentic AI should not have unlimited freedom.

Decision boundaries tell the AI:

- What it is allowed to do

- What it must never do

- When to ask for human help

Constraints may include:

- Budget limits

- Time limits

- Security rules

- Legal or ethical guidelines

Without boundaries, an AI agent may:

- Take unnecessary actions

- Cause unintended damage

- Drift away from the real goal

Clear constraints keep the agent focused and safe while still allowing autonomy.
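Boundaries like these can be checked in code before any action runs. The following is a rough sketch under assumed names: the `Boundaries` class, the three verdicts, the action names, and the spending limits are all invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"
    DENY = "deny"


@dataclass(frozen=True)
class Boundaries:
    allowed_actions: frozenset
    forbidden_actions: frozenset
    budget_limit: float        # hard cap on spend per action
    approval_threshold: float  # spend above this needs a human

    def check(self, action: str, cost: float = 0.0) -> Verdict:
        # What the agent must never do (anything unlisted is denied too).
        if action in self.forbidden_actions or action not in self.allowed_actions:
            return Verdict.DENY
        if cost > self.budget_limit:
            return Verdict.DENY
        # When to ask for human help.
        if cost > self.approval_threshold:
            return Verdict.ASK_HUMAN
        return Verdict.ALLOW


rules = Boundaries(
    allowed_actions=frozenset({"send_email", "issue_refund"}),
    forbidden_actions=frozenset({"delete_account"}),
    budget_limit=500.0,
    approval_threshold=100.0,
)
print(rules.check("send_email"))
print(rules.check("issue_refund", cost=250.0))
print(rules.check("delete_account"))
```

Denying anything that is not explicitly allowed is a deliberate choice here: new tools stay off limits until someone adds them to the list.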

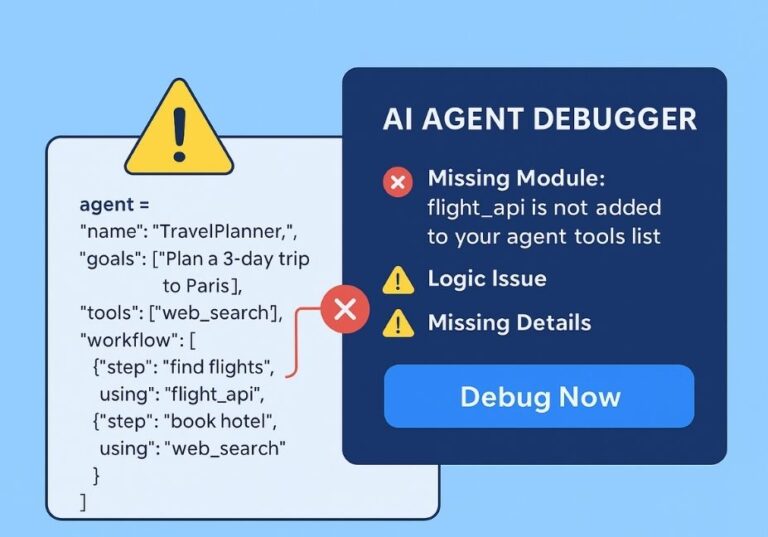

Failure States and Recovery Paths

No AI system is perfect. Failure will happen.

A strong agentic AI system is designed to:

- Detect when something goes wrong

- Stop harmful actions

- Recover gracefully

Examples of failure states:

- Missing or incorrect data

- Tool errors or timeouts

- Conflicting instructions

Recovery paths might include:

- Retrying with a different method

- Asking a human for help

- Rolling back an action

Planning for failure is not pessimistic; it is professional. It ensures the AI system remains useful even when conditions are not ideal.
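Two of the recovery paths, retrying with a different method and asking a human, can be sketched as follows. The `ToolError` exception, the `run_with_recovery` helper, and both lookup functions are hypothetical. Rolling back an action is left out because it depends entirely on the system being changed.

```python
from typing import Callable, Sequence


class ToolError(Exception):
    """Raised by a tool when it fails, for example on a timeout or bad data."""


def run_with_recovery(methods: Sequence[Callable[[], str]],
                      retries_per_method: int = 2) -> dict:
    errors = []
    for method in methods:
        for attempt in range(1, retries_per_method + 1):
            try:
                return {"status": "ok", "result": method(), "errors": errors}
            except ToolError as exc:
                # Detect the failure and keep a record of it.
                errors.append(f"{method.__name__} attempt {attempt}: {exc}")
    # Every method failed: stop and hand the case to a human.
    return {"status": "escalated_to_human", "result": None, "errors": errors}


def primary_lookup() -> str:
    raise ToolError("timeout")          # simulated failure


def cached_lookup() -> str:
    return "order #42 shipped"          # simulated fallback data


outcome = run_with_recovery([primary_lookup, cached_lookup])
print(outcome["status"], outcome["result"])
print(outcome["errors"])
```

Only `ToolError` is caught on purpose, so unexpected bugs still surface instead of being silently retried.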

By decomposing problems carefully, you give agentic AI a clear roadmap to follow, which makes it more effective, safer, and easier to trust.

Designing Agentic AI Solution Architecture

Once you understand the problem and break it into tasks, the next step is designing the architecture of your agentic AI. Architecture defines how the AI thinks, decides, and acts. A strong design ensures reliability, scalability, and real-world usefulness.

Core Components of an Agentic AI System

A well-designed agentic AI system usually has four core components:

1. Planner

The planner decides what the AI should do next. It breaks goals into smaller steps, prioritizes tasks, and maps out a path to reach the outcome. Think of it as the brain of the AI, guiding all actions.

2. Executor

The executor carries out the tasks. It interacts with tools, APIs, databases, or even user interfaces. This is where decisions turn into action, making the AI a practical worker rather than just a thinker.

3. Memory

Memory allows the agent to remember past actions, results, and user preferences. It avoids repeating mistakes and helps the AI improve over time. Memory is critical for multi-step tasks where context matters.

4. Feedback Loop

The feedback loop monitors results and outcomes. It checks if the actions are achieving the goal and informs the planner to adjust. This makes the AI adaptive and self-correcting, which is essential for complex, real-world problems.

These components together create a closed loop system, where the AI observes, plans, acts, and learns continuously.
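To show how the four components fit together, here is a deliberately small sketch. The `Planner` and `Executor` classes, the `run_agent` function, and the two tools are assumptions for illustration. A real planner would usually call a model rather than walk a fixed list.

```python
from typing import Callable, Optional


class Planner:
    """Picks the first step of the goal that has not yet succeeded."""

    def next_step(self, steps: list[str], memory: list[dict]) -> Optional[str]:
        succeeded = {entry["step"] for entry in memory if entry["ok"]}
        return next((s for s in steps if s not in succeeded), None)


class Executor:
    """Runs a step by calling the matching tool; tools return True on success."""

    def __init__(self, tools: dict[str, Callable[[], bool]]):
        self.tools = tools

    def run(self, step: str) -> bool:
        return self.tools[step]()


def run_agent(steps, tools, max_iterations: int = 10) -> list[dict]:
    planner, executor = Planner(), Executor(tools)
    memory: list[dict] = []  # memory: every attempt and its result
    for _ in range(max_iterations):
        step = planner.next_step(steps, memory)
        if step is None:  # nothing left to do, so the goal is reached
            break
        ok = executor.run(step)
        # Feedback loop: the stored result shapes the planner's next choice.
        memory.append({"step": step, "ok": ok})
    return memory


# Hypothetical tools: the order lookup fails once, then succeeds.
calls = {"fetch_order": 0}


def fetch_order() -> bool:
    calls["fetch_order"] += 1
    return calls["fetch_order"] > 1


def draft_reply() -> bool:
    return True


memory = run_agent(["fetch_order", "draft_reply"],
                   {"fetch_order": fetch_order, "draft_reply": draft_reply})
print([(m["step"], m["ok"]) for m in memory])
```

Because the failed lookup is written to memory, the planner selects the same step again on the next pass instead of moving on with missing data.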

Frameworks for Problem-Driven Agentic AI

Several frameworks make building agentic AI faster and more structured. Using a proven framework can save time and reduce risk. Examples include:

1. LangGraph

- Focuses on linking tasks, tools, and goals in a graph-based structure

- Helps agents plan multi-step actions efficiently

2. AutoGen

- Simplifies task orchestration and automation

- Integrates AI models with external tools and APIs

- Designed for problem-first AI development

3. CrewAI

- Designed for collaborative agent systems

- Rich in entities and data connections

- Lets multiple agents work together toward a common goal

Choosing the right framework depends on your problem complexity, required autonomy, and integration needs. A solid architecture ensures that your agentic AI is not only capable but also maintainable, scalable, and aligned with real-world business goals.

Human-in-the-Loop in Agentic AI Applications

Even the smartest agentic AI cannot replace human judgment entirely. Including humans in the loop ensures safety, reliability, and alignment with business goals. It balances the AI’s autonomy with oversight.

Why Full Autonomy Is Risky

Giving AI complete freedom may sound exciting, but it comes with real risks:

- Wrong decisions at scale – The AI may confidently take actions that are incorrect or harmful.

- Ethical or legal issues – Without oversight, agents can violate rules or regulations.

- Goal misalignment – The AI might optimize for the wrong outcome, especially in dynamic environments.

For example, a fully autonomous AI in finance could process transactions too aggressively, causing losses, or in healthcare, it could make recommendations that require expert validation. Human oversight mitigates these risks.

Approval, Review, and Escalation Layers

Human-in-the-loop (HITL) frameworks add structured checkpoints where humans can intervene:

- Approval Layer – Humans approve high-risk actions before execution.

- Review Layer – AI decisions are monitored post-action for accuracy and alignment.

- Escalation Layer – Complex or unexpected scenarios are escalated to human experts.

These layers allow the AI to work independently most of the time while ensuring that critical errors are caught.
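The three layers can be combined in one small gate that every action passes through. This is a sketch under assumptions: the `Action` and `HumanInTheLoop` classes, the risk labels, and the `approver` callback (which stands in for a real person clicking approve or reject) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    name: str
    risk: str  # "low", "high", or "unknown" (labels are assumptions)


@dataclass
class HumanInTheLoop:
    approver: Callable[[Action], bool]                # approval layer
    review_log: list = field(default_factory=list)    # review layer
    escalations: list = field(default_factory=list)   # escalation layer

    def handle(self, action: Action, execute: Callable[[Action], str]) -> str:
        # Escalation: unexpected cases go straight to a human expert.
        if action.risk == "unknown":
            self.escalations.append(action.name)
            return "escalated"
        # Approval: high-risk actions need a yes before they run.
        if action.risk == "high" and not self.approver(action):
            return "rejected"
        result = execute(action)
        # Review: every executed action is logged for a later check.
        self.review_log.append((action.name, result))
        return result


hitl = HumanInTheLoop(approver=lambda a: a.name != "refund_5000")
execute = lambda a: f"done:{a.name}"

print(hitl.handle(Action("send_status_email", "low"), execute))
print(hitl.handle(Action("refund_5000", "high"), execute))
print(hitl.handle(Action("odd_request", "unknown"), execute))
```

Low-risk actions never wait for a person, which is how this design keeps routine work fast.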

Balancing Speed With Control

The goal of HITL is not to slow the AI down unnecessarily, but to balance speed with safety:

- Routine, low-risk tasks can be fully automated

- High-impact or sensitive decisions involve human review

- Feedback from humans improves the AI’s performance over time

For instance, an AI agent managing customer requests can handle most queries automatically but escalate unusual complaints to a human agent. This approach ensures efficiency without sacrificing quality or trust.

Human-in-the-loop design is crucial for practical, real-world agentic AI, making systems smarter, safer, and more reliable.

Agentic AI Development Process: From Idea to Deployment

Building an agentic AI application is not a one-step task. It requires a careful, iterative approach that moves from concept to deployment while ensuring the system stays reliable, safe, and aligned with goals.

Iterative Development for Agentic Systems

Agentic AI systems are complex, so you can’t just build once and forget. Iterative development means:

- Start small with a prototype or minimal viable agent

- Test and refine tasks, planning, and decision loops

- Gradually scale to handle more complex goals

This approach reduces risks and helps catch mistakes early. Each iteration improves the agent’s understanding, performance, and adaptability.

Testing Agent Behavior, Not Just Outputs

Traditional AI testing focuses on outputs: “Does the answer look correct?”

Agentic AI requires behavioral testing:

- Does the AI plan correctly?

- Does it choose the right tools or actions?

- Does it handle failures gracefully?

- Does it stay aligned with the main goal?

Testing behavior ensures the agent acts intelligently and safely, not just that it produces correct outputs in isolated cases.
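Behavioral tests usually run against a trace of what the agent did, not just its final answer. The sketch below assumes a trace is a list of dictionaries with `tool`, `error`, and `goal_reached` keys. That format and the `check_trace` helper are invented for this example.

```python
def check_trace(trace: list[dict], allowed_tools: set[str], max_steps: int) -> list[str]:
    """Return a list of behavioral violations found in an agent's action trace."""
    problems = []
    # Planning: did the agent stay within a reasonable number of steps?
    if len(trace) > max_steps:
        problems.append(f"too many steps: {len(trace)} > {max_steps}")
    for i, step in enumerate(trace):
        # Tool choice: did it only use tools it was supposed to use?
        if step["tool"] not in allowed_tools:
            problems.append(f"step {i}: unexpected tool {step['tool']}")
        # Failure handling: an error is fine unless the run ends on it.
        if step.get("error") and i + 1 == len(trace):
            problems.append(f"step {i}: run ended on an unrecovered error")
    # Goal alignment: did the last step actually reach the goal?
    if not trace or trace[-1].get("goal_reached") is not True:
        problems.append("goal not reached")
    return problems


good_trace = [
    {"tool": "lookup_order", "error": "timeout"},
    {"tool": "lookup_order"},
    {"tool": "send_reply", "goal_reached": True},
]
print(check_trace(good_trace, {"lookup_order", "send_reply"}, max_steps=5))
```

Here the first lookup fails, but the run passes because the agent recovered and still reached the goal.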

Deployment Considerations

Deploying an agentic AI system is different from regular software:

- Monitor its real-world decisions continuously

- Ensure human-in-the-loop checkpoints remain active

- Manage scalability and resources to handle multiple agents

- Prepare for failure recovery and updates

Deployment is not the end—it’s the start of continuous learning and adaptation.

Measuring Success in Agentic AI Applications

Once deployed, you need to know whether the agentic AI is actually delivering value. Measuring success helps improve the system and justify investment in autonomous agents.

Defining Agentic AI Performance Metrics

Metrics should focus on both results and agent behavior. Examples:

- Task completion rate

- Time taken to reach goals

- Error rates in decision-making

- Human oversight interventions

Clear metrics prevent “busy but useless” agents and ensure measurable ROI.
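The four example metrics can be computed from simple per-run records. In this sketch, the `Run` record, its field names, and the sample numbers are made up for illustration. Real values would come from your own logs.

```python
from dataclasses import dataclass


@dataclass
class Run:
    completed: bool
    seconds: float
    decision_errors: int
    decisions: int
    human_interventions: int


def summarize(runs: list[Run]) -> dict:
    if not runs:
        raise ValueError("need at least one run to compute metrics")
    total = len(runs)
    finished = [r for r in runs if r.completed]
    decisions = sum(r.decisions for r in runs)
    return {
        "task_completion_rate": len(finished) / total,
        # Time to goal only counts runs that actually reached the goal.
        "avg_seconds_to_goal": (sum(r.seconds for r in finished) / len(finished)
                                if finished else None),
        "decision_error_rate": (sum(r.decision_errors for r in runs) / decisions
                                if decisions else 0.0),
        "interventions_per_run": sum(r.human_interventions for r in runs) / total,
    }


# Made-up sample data for four runs.
runs = [
    Run(True, 40.0, 0, 5, 0),
    Run(True, 60.0, 1, 5, 1),
    Run(False, 120.0, 2, 10, 1),
    Run(True, 50.0, 0, 5, 0),
]
print(summarize(runs))
```

Tracking interventions alongside completion rate shows whether the agent finishes tasks on its own or only with frequent human help.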

Outcome-Based vs Accuracy-Based Evaluation

Traditional AI evaluation often focuses on accuracy: is the answer right or wrong? Agentic AI requires outcome-based evaluation:

- Did the agent achieve the intended goal?

- Were decisions aligned with business objectives?

Accuracy matters, but success is defined by real-world results, not just correct outputs.

Continuous Learning and Feedback

Agentic AI systems improve over time through feedback loops:

- Learn from successes and failures

- Adjust planning and decision-making

- Adapt to new data, tools, and environments

Continuous learning ensures the AI remains effective, relevant, and aligned, even as problems or business needs change.

Practical Implementation Strategies

Once you have a clear problem and a solid architecture, the next step is practical implementation. This ensures your agentic AI delivers real-world value without wasting time, money, or effort.

Selecting the Right Agentic AI Use Cases

Choosing the right use case is crucial. Focus on problems that:

- Require multi-step decision-making

- Involve repetitive or time-consuming tasks

- Benefit from autonomy and adaptability

- Have measurable impact on business goals

Avoid using agentic AI for simple tasks or rare events. Start with high-value areas like:

- Customer service automation

- Internal workflow coordination

- Data monitoring and alert systems

The right selection ensures faster ROI and demonstrates the true power of agentic AI.

Building for Adaptability and Scale

Agentic AI systems must adapt to changing environments and grow as business needs evolve:

- Design modular task structures

- Use flexible frameworks that allow easy updates

- Ensure agents can collaborate or split work

- Plan infrastructure to handle increased load

Adaptability and scale prevent your system from becoming obsolete or brittle as tasks or data grow.

Avoiding Over-Engineering

Over-engineering is a common trap:

- Adding unnecessary complexity

- Creating too many tasks or sub-goals

- Integrating too many tools before proof of concept

Keep the solution simple, focused, and effective. Implement only what is required to solve the problem. Over-engineering slows deployment, increases cost, and can make agents harder to maintain.

Ethical, Security, and Practical Considerations

Agentic AI has power, but with power comes responsibility. Ignoring ethical, security, or practical concerns can lead to real risks and failures.

Ethical Challenges in Agentic AI

Agentic AI can make decisions independently, which creates ethical risks:

- Unintended consequences from autonomous actions

- Privacy violations if sensitive data is mishandled

- Lack of accountability for AI-driven decisions

Mitigation strategies include:

- Human-in-the-loop oversight

- Clear ethical guidelines

- Transparency in decision-making

Bias, Drift, and Misalignment Risks

Agentic AI systems can drift or develop biases over time:

- Biases from training data affecting outputs

- Goal misalignment with changing business priorities

- Unexpected behaviors when facing new scenarios

Regular monitoring, retraining, and alignment checks keep the AI safe, fair, and aligned.

Scalability, Maintenance, and Cost Control

Practical deployment requires planning for:

- Scalability – Can the system handle more tasks, users, or agents?

- Maintenance – Updating AI components, frameworks, and integrations

- Cost Control – Avoid over-engineering, inefficient infrastructure, or excessive compute costs

Ethics, risk management, and cost-awareness together ensure your agentic AI remains effective, responsible, and sustainable.

Case Studies: Problem-First Agentic AI in Action

Seeing real-world examples helps you understand how problem-first agentic AI actually works. These case studies show how breaking down the problem first, designing the right architecture, and keeping humans in the loop can deliver measurable results.

Customer Support Automation

Problem: Customer support teams spend hours answering repetitive questions, checking order statuses, and managing tickets.

Agentic AI Solution:

- The AI agent reads incoming customer emails or chat messages

- Identifies the issue and checks relevant databases

- Suggests or sends responses automatically

- Escalates complex or unusual cases to human agents

Impact:

- Response times reduced by up to 50%

- Agents focus on high-value interactions

- Customer satisfaction improves while operational costs drop

This shows how a problem-first approach ensures the agent solves the right problem efficiently.

Healthcare Operations and Administration

Problem: Administrative tasks in hospitals, like patient scheduling, insurance verification, and follow-ups, consume significant time.

Agentic AI Solution:

- The agent organizes patient appointments based on priority and availability

- Verifies insurance information and checks compliance rules

- Sends reminders and alerts to patients and staff

- Provides summarized reports to human supervisors

Impact:

- Staff can focus more on patient care

- Errors and missed appointments decrease

- Workflow efficiency increases significantly

Healthcare demonstrates that goal-driven AI with structured tasks improves both quality and efficiency.

Internal Business Process Optimization

Problem: Companies have complex internal processes—like procurement, invoice approvals, and resource allocation—that involve multiple teams and systems.

Agentic AI Solution:

- Agentic AI coordinates across tools, teams, and data sources

- Identifies bottlenecks and prioritizes tasks

- Automates routine decisions while escalating exceptions

- Tracks outcomes for continuous improvement

Impact:

- Reduced operational delays

- Improved cross-department collaboration

- Scalable, repeatable process improvements

Internal optimization shows that agentic AI can act as a digital coordinator, handling multi-step tasks that were previously slow and error-prone.

Conclusion: Building Smarter Agentic AI With a Problem-First Mindset

Building agentic AI applications is not just about using the latest tools or models—it’s about solving the right problems the right way. A problem-first approach ensures that your AI systems are purposeful, efficient, and aligned with real-world goals.

By clearly defining the problem, breaking it into manageable tasks, designing robust architecture, and including human oversight, you can create AI agents that deliver real value while minimizing risk. Measuring success based on outcomes, not just outputs, keeps the AI focused on results that matter.

Key Takeaways:

- Start with the problem, not the technology.

- Break complex challenges into tasks and goal hierarchies.

- Design agentic AI with planners, executors, memory, and feedback loops.

- Include human-in-the-loop oversight to balance autonomy and control.

- Focus on high-impact, measurable use cases to maximize value.

Final Recommendation:

Adopt a problem-first mindset in every AI project. Prioritize clarity, safety, and scalability. Start small, test iteratively, and expand once your agentic AI demonstrates measurable impact.

With this approach, you can turn agentic AI from a theoretical concept into a practical, high-impact solution that drives meaningful results for your business or organization.

Frequently Asked Questions (FAQs)

Here are answers to the most common questions about agentic AI and the problem-first approach, written for clarity and practical guidance.

What is the main difference between agentic AI and traditional AI automation?

Traditional AI automation is reactive. It follows fixed rules or scripts to complete tasks when triggered.

Agentic AI, on the other hand, is proactive and autonomous. It sets goals, plans actions, uses tools, and adapts its behavior to achieve desired outcomes without constant human instructions. Think of agentic AI as a digital worker rather than a simple responder.

How long does it take to build an agentic AI application?

The timeline depends on:

- Problem complexity

- Number of tasks and tools integrated

- Human-in-the-loop layers

- Iterative testing cycles

For simple applications, a few weeks may be enough for a prototype. Complex, multi-step enterprise solutions may take several months to design, test, and deploy. Starting small and iterating is key.

What are the biggest challenges in problem-first AI development?

The main challenges include:

- Defining the right problem – Avoid vague goals or misaligned objectives.

- Breaking tasks properly – Complex problems need clear hierarchies and boundaries.

- Maintaining alignment – Ensuring AI decisions match business priorities and ethics.

- Handling failures – Designing recovery paths for unexpected outcomes.

Overcoming these challenges requires careful planning, iterative testing, and structured oversight.

Do small teams need large budgets for agentic AI?

Not necessarily. Small teams can succeed by:

- Starting with high-impact, manageable use cases

- Using frameworks like LangGraph, AutoGen, or CrewAI

- Focusing on minimal viable agents before scaling

- Leveraging cloud tools and APIs instead of building everything from scratch

The key is smart problem-first design, not big budgets.

How do I keep agentic AI aligned with business goals?

Alignment comes from:

- Defining desired outcomes clearly

- Setting constraints and decision boundaries

- Regular monitoring and human-in-the-loop oversight

- Measuring success with outcome-based metrics, not just accuracy

Continuous feedback and iterative improvements ensure the agent stays on track with business priorities.

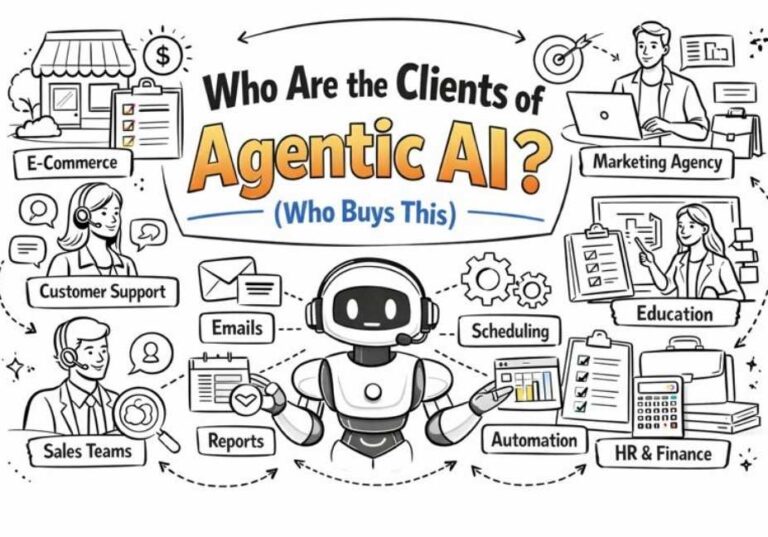

Which industries benefit most from agentic AI applications?

Agentic AI is particularly effective in industries where multi-step decision-making and automation of complex workflows add value:

- Customer service – automating tickets and queries

- Healthcare administration – scheduling, insurance verification, patient follow-ups

- Finance – fraud detection, transaction monitoring

- Business operations – workflow optimization, procurement, and resource allocation

- Software development – automating repetitive coding or testing tasks

Any industry with repetitive, multi-step, or data-intensive processes can gain efficiency and accuracy from agentic AI.
