Why AI Transformation Is a Problem of Governance — Not Technology

Companies are spending billions on artificial intelligence, and most are getting almost nothing back. The reason is not the algorithm. It is the absence of accountability, oversight, and structure: in other words, governance.

Every week, a new headline announces that a company has “deployed AI.” Every month, another quietly shelves its AI pilot after twelve months and millions of dollars. What went wrong? The models worked fine. The data was there. The budget was approved. Yet the project died anyway — not from a technical failure, but from a leadership and accountability failure. This is the core argument of one of the most important ideas in technology today: AI transformation is a problem of governance, not just a problem of code.

This article will show you, in plain language, exactly why that is true, what it means in the real world, and what any organization — from a small business to a national government — can do right now to stop repeating the same expensive mistake.

- 73% of enterprise AI deployments fail to meet projected returns

- 78% of government leaders struggle to measure AI impact

- 14% of organizations have enterprise-level AI governance in place

- 1% of companies say they have reached AI maturity

The Big Misunderstanding About AI Failure

When people talk about AI failing, they usually imagine a robot making a mistake, a chatbot giving a wrong answer, or a machine learning model producing biased results. Those things do happen. But they are symptoms, not root causes. The real reason most AI initiatives fail is that nobody decided who was in charge of them.

Think about it this way. Imagine a school project where five students all have great ideas, all have access to the same books and computers, but nobody was told who the leader is, nobody wrote down the plan, and nobody agreed on what a good final grade looks like. What happens? Everyone works in a different direction. Nobody finishes. The project collapses — not because the students were bad students, but because there was no structure around them.

That is exactly what happens with AI in most companies and governments today. The tools are good. The people are smart. But there is no governance: no clear rules, no defined roles, no measurable goals, and no accountability when things go wrong.

“AI moves faster than accountability. Without governance, you don’t just get failed projects — you get systems making decisions that nobody can explain or reverse.”

What Does “AI Governance” Actually Mean?

AI governance is the system of rules, roles, and processes that guides how an organization builds, uses, monitors, and improves its AI systems. It is not a single document or a one-time policy meeting. It is an ongoing operating model — as important to a business as its accounting system or its legal department.

Good AI governance answers five critical questions for every AI system in use:

- Who owns this AI system? — One named person or team must be accountable for every model in production.

- What data is it allowed to use? — Data provenance, privacy compliance, and data quality standards must be defined upfront.

- What decisions can it make on its own? — Human-in-the-loop versus full automation must be clearly mapped for every use case.

- How do we know if it is working correctly? — Real-time monitoring, performance dashboards, and drift detection are not optional add-ons.

- What happens when it goes wrong? — Incident response plans, rollback procedures, and escalation paths must exist before launch, not after.

If an organization cannot answer all five of these questions for its AI systems, it does not have AI governance. It has AI chaos — dressed up in a business case and a budget approval.
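One way to make the five questions checkable is to store the answers as a record per system and flag any that are blank. The sketch below is illustrative only: the `GovernanceRecord` class, its field names, and the sample system are assumptions made for this example, not part of any standard or framework.

```python
from dataclasses import dataclass

# The five governance questions, keyed by hypothetical field names.
QUESTIONS = {
    "owner": "Who owns this AI system?",
    "allowed_data": "What data is it allowed to use?",
    "autonomy": "What decisions can it make on its own?",
    "monitoring": "How do we know if it is working correctly?",
    "incident_plan": "What happens when it goes wrong?",
}


@dataclass
class GovernanceRecord:
    """Answers to the five questions for one AI system (blank = unanswered)."""
    system_name: str
    owner: str = ""
    allowed_data: str = ""
    autonomy: str = ""
    monitoring: str = ""
    incident_plan: str = ""


def unanswered_questions(record: GovernanceRecord) -> list[str]:
    """Return the governance questions that have no answer on record."""
    return [q for name, q in QUESTIONS.items() if not getattr(record, name).strip()]


# Hypothetical system with only two of the five questions answered.
chatbot = GovernanceRecord(
    system_name="support-chatbot",
    owner="Customer Support Platform team",
    allowed_data="Public help-center articles only",
)
for question in unanswered_questions(chatbot):
    print(f"{chatbot.system_name}: unanswered -> {question}")
```

A record that returns an empty list has an answer on file for every question. Whether those answers are good ones still needs human review.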

The Numbers That Cannot Be Ignored

This is not a theoretical concern. The data is alarming and consistent across every major research firm and international institution.

According to the World Economic Forum, the world is building an intelligent economy on a fractured foundation. Algorithms now travel faster than the agreements that govern them, and no shared venue exists to coordinate their norms. The result is what researchers call a “trust gap” — a silent drag on global growth and confidence in AI systems.

Meanwhile, McKinsey reports that nearly all companies are investing in AI, yet only 1% say they have reached AI maturity. Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, mainly because of weak risk controls, poor data quality, escalating costs, and unclear business value. The 2025 AI Governance Benchmark Report found that while 80% of enterprises have fifty or more generative AI use cases in development, most have only a handful actually running in production, and 58% of leaders say disconnected governance systems are the main reason they cannot scale.

Key finding: AI transformation is a problem of governance because the primary challenge is not model capability but control at scale. Organizations fail when no one defines ownership, monitors behavior, or builds accountability structures before deploying AI into real business decisions.

Why Technology Alone Will Never Solve This

Here is something important that AI vendors will rarely tell you: buying better AI tools does not solve a governance problem. In fact, more powerful AI without stronger governance makes the problem worse, not better.

Traditional software works like a light switch. Press it, and the light turns on. The same input always gives the same output. You can test it, certify it, and leave it running. AI is different. AI models are probabilistic, and their behavior can drift over time: the data they see in production shifts away from the data they were trained on, or the model is retrained on new data, and it produces outputs today that it would not have produced six months ago. A model that was safe and accurate at launch can quietly become biased or unreliable. Without governance structures like runtime monitoring and regular audits, nobody will know until something goes seriously wrong.

This is why shadow AI, where employees use unapproved AI tools because no sanctioned options are available, is such a serious warning sign. It is not only a security issue. It is a diagnostic signal that governance has already failed at the policy level. When people route around official channels, it means the official channels are not working for them.

The Governance Gap Is a Leadership Crisis

Let us be direct about one uncomfortable truth: the AI governance gap is not a technology crisis. It is a leadership crisis. Research from the National Association of Corporate Directors (NACD) shows that boards often lack adequate visibility into what governance structures are even in place inside their own organizations. Deloitte’s global boardroom survey found that 66% of boards report limited or no AI expertise among their members. And yet these same boards are approving millions in AI spending.

The boardroom conversation has changed — companies are no longer asking whether to invest in AI. They are asking how to make it work. But they are asking that question without the governance infrastructure to answer it reliably. That is like a hospital buying the most advanced surgical equipment in the world, without training the staff, without sterilization protocols, and without a patient safety plan. The equipment is not the problem. The missing system around it is.

AI incidents are increasing. Data from the AI Incident Database reveals a 32% increase in reported AI incidents in 2024 alone, with the same trend expected in 2025 and 2026. When incidents happen, they are almost never caused by a bad model. They are caused by a breakdown in oversight, accountability, and regulatory compliance.

The Regulatory World Has Already Changed

Many organizations are still treating AI governance as a “nice to have.” The regulatory environment has moved on. It is now a legal requirement — and the penalties for ignoring it are growing fast.

The EU AI Act entered into force in August 2024, and its first obligations began to apply in February 2025, with further requirements phasing in over the following years. It applies to any company whose AI systems affect EU residents, even if that company is based elsewhere. High-risk AI systems must meet strict compliance standards, and penalties for the most serious violations can reach €35 million or 7% of global annual turnover. This is the same enforcement model that made GDPR impossible to ignore, and it is now being applied to AI.

In the United States, over 480 enacted bills now reference “artificial intelligence,” and more than 1,100 AI-related bills were introduced in 2025 alone. States like Colorado, California, and Texas have enacted AI disclosure, bias prevention, and risk management requirements. Boards are now facing fiduciary liability for AI failures — meaning that ignoring governance is not just a reputational risk, it is a personal legal risk for executives and directors.

The OECD’s landmark 2025 report on governing with AI identified seven key enablers for trustworthy government AI: governance, data, digital infrastructure, skills, investment, procurement, and partnerships. Governance is listed first — not by accident.

What Good AI Governance Actually Looks Like

Strong AI governance in 2026 is not a PDF sitting in a compliance folder. It is a live, operational system that covers the full AI lifecycle. Here is what it includes in practice:

1. An AI Inventory

Map every AI tool currently in use, including every SaaS integration and internal model. You cannot govern what you cannot see. Many organizations discover their AI landscape is two or three times larger than they thought, because employees have adopted tools quietly over time.

2. Risk-Based Classification

Not all AI systems carry the same risk. A spell-checker does not need the same oversight as an automated hiring tool or a medical diagnosis assistant. A risk-calibrated approach — similar to the NIST AI Risk Management Framework — allows organizations to focus governance resources where they matter most, rather than applying the same level of scrutiny to everything and exhausting the team.
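A risk-calibrated approach can start as a small, explicit rubric. The sketch below is a deliberately simplified assumption for illustration: the two yes/no questions, the three tiers, and the review intervals are hypothetical and are not taken from the NIST AI Risk Management Framework or any regulation.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical review cadence per tier, in days (an assumption, not a standard).
REVIEW_INTERVAL_DAYS = {RiskTier.HIGH: 30, RiskTier.MEDIUM: 90, RiskTier.LOW: 365}


def classify_risk(affects_people: bool, acts_without_review: bool) -> RiskTier:
    """Assign an illustrative tier from two yes/no questions.

    affects_people: the system informs consequential decisions about people
        (for example hiring, lending, healthcare, or benefits).
    acts_without_review: the system takes actions with no human sign-off.
    """
    if affects_people:
        return RiskTier.HIGH
    if acts_without_review:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Hypothetical inventory entries: (name, affects_people, acts_without_review).
systems = [
    ("spell-checker", False, False),
    ("invoice-routing-agent", False, True),
    ("automated-hiring-screen", True, True),
]
for name, affects_people, acts_without_review in systems:
    tier = classify_risk(affects_people, acts_without_review)
    print(f"{name}: {tier.value} risk, review every {REVIEW_INTERVAL_DAYS[tier]} days")
```

A real rubric would have more questions and would be agreed with legal and compliance. The value of writing it down is that two people classifying the same system get the same tier.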

3. Clear Ownership and Accountability

Every AI system in production must have a named Business Owner, a Technical Lead, and a Data Steward. The key word here is accountability. "Everyone is responsible" means nobody is responsible. What is needed is specific, named, auditable accountability for specific systems and decisions. Without that, governance frameworks are just aspirational documents.

4. Runtime Monitoring, Not Just Launch-Day Testing

Testing an AI system before launch is the minimum. Monitoring it continuously after launch is the requirement. This includes tracking model drift, watching for bias signals, logging every decision the system makes, and building dashboards that are visible to leadership — not just the data science team.
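As a rough illustration of what tracking drift can mean in practice, the sketch below compares a model's launch-time scores with recent scores using the Population Stability Index (PSI), one common drift statistic among several. The function name, the sample data, and the 0.2 alert threshold (a widely quoted rule of thumb, not a requirement) are assumptions made for this example.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two samples of a numeric score; a larger PSI means a bigger shift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps log() defined when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = bucket_shares(baseline), bucket_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


DRIFT_ALERT_THRESHOLD = 0.2  # rule-of-thumb value, treated here as an assumption

# Hypothetical data: scores at launch versus scores from the latest week.
launch_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(launch_scores, recent_scores)
if psi > DRIFT_ALERT_THRESHOLD:
    print(f"Drift alert: PSI={psi:.2f}, notify the system owner")
```

The statistic itself matters less than the routing: the alert should reach a named owner and appear on a dashboard that leadership can see.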

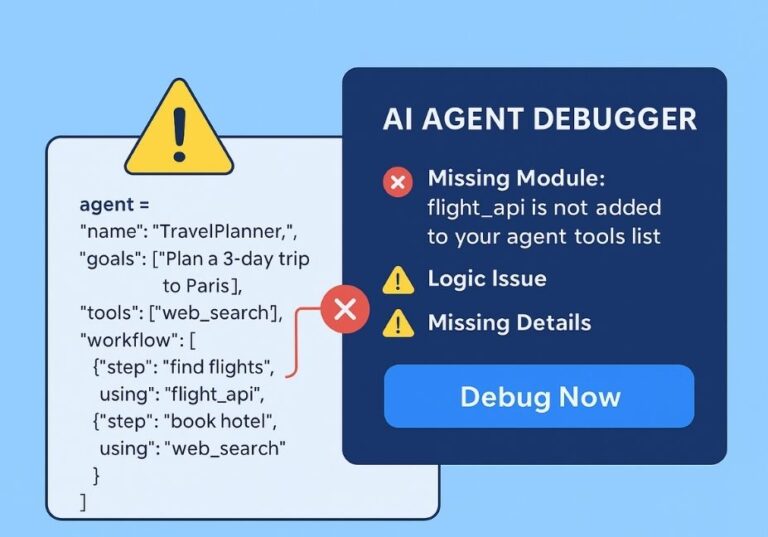

5. Human-in-the-Loop Design

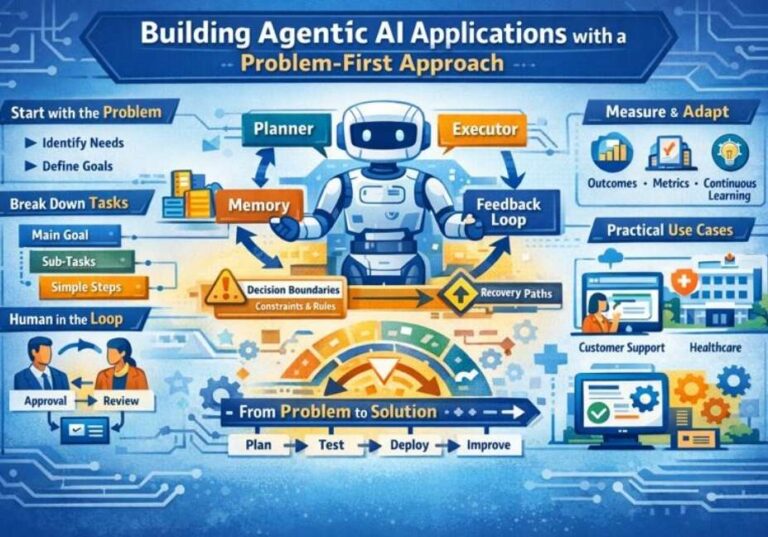

Especially as agentic AI systems become more common — AI that places orders, sends communications, and triggers workflows without human review — organizations need to define exactly when a human must authorize an action. As one governance researcher put it: an autonomous procurement agent that misreads pricing data and executes $2 million in excess orders is not a technology failure. It is a governance failure. The question “who approved that?” should always have a clean answer.
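The procurement example can be sketched as an approval gate: small orders pass automatically under a recorded policy, while larger ones wait for a named human. Everything here is hypothetical, including the `ProposedOrder` and `Decision` types, the `review_order` function, and the $10,000 threshold. It shows the pattern and is not a production control.

```python
from dataclasses import dataclass
from typing import Optional

APPROVAL_THRESHOLD_USD = 10_000  # hypothetical limit set by policy


@dataclass
class ProposedOrder:
    supplier: str
    amount_usd: float


@dataclass
class Decision:
    executed: bool
    approved_by: Optional[str]  # records the answer to "who approved that?"
    reason: str


def review_order(order: ProposedOrder, human_approver: Optional[str] = None) -> Decision:
    """Execute small orders automatically; hold large ones for a named human."""
    if order.amount_usd <= APPROVAL_THRESHOLD_USD:
        return Decision(True, "policy:auto-approve", "below approval threshold")
    if human_approver:
        return Decision(True, human_approver, "above threshold, human authorized")
    return Decision(False, None, "above threshold, waiting for human authorization")


# The agent proposes a very large order; without an approver it is held.
held = review_order(ProposedOrder("Acme Supplies", 2_000_000))
print(held)

# The same order goes through once a named person signs off.
approved = review_order(ProposedOrder("Acme Supplies", 2_000_000), human_approver="j.doe")
print(approved)
```

The design choice worth noting is that every executed decision carries an `approved_by` value, whether a person or a named policy, so the audit trail is never empty.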

Governance Does Not Slow Innovation — It Enables It

The most common objection to AI governance is that it slows things down. This is one of the most persistent and damaging myths in the entire field. The opposite is true.

Organizations with mature AI governance frameworks deploy AI 40% faster and achieve 30% better return on investment than those without, according to 2025 industry benchmarks. Why? Because when teams know the rules, they stop wasting time re-doing work after incidents, fighting compliance fires, or waiting for approvals that nobody is authorized to give. Clear governance means clear lanes. Clear lanes mean faster movement.

Think of it like traffic laws. Roads without traffic laws are not faster than roads with them. They are slower — and far more dangerous. The rules do not stop cars from moving. They let more cars move at once, in the same direction, without crashing into each other.

The most successful AI-adopting organizations in 2026 are not the ones with the fastest models. They are the ones with the most structured, transparent, and accountable frameworks. Governance is their competitive advantage — not their constraint.

A Simple Starting Point for Any Organization

If your organization is just beginning to address AI governance, here is a practical starting sequence that aligns with both the NIST AI Risk Management Framework and the OECD’s recommended enablers:

- Start with a governance audit. Find every AI tool in use. Classify each one by risk level. Identify which ones have no named owner.

- Build a cross-functional AI committee. Include product, engineering, legal, compliance, data, and business leadership. Governance is not an IT responsibility. It is a shared operating model.

- Define success in business terms. Every AI project must have measurable outcomes tied to business goals — not just technical uptime metrics.

- Create an AI incident response plan. What happens when an AI system produces a harmful output? Who is called? What gets turned off? This plan should exist before any high-risk AI system goes live.

- Invest in explainability and transparency. AI systems used in decisions that affect people — hiring, lending, healthcare, benefits — must be able to explain those decisions in plain language. Algorithmic transparency is both an ethical requirement and a legal one in many jurisdictions.

The Bottom Line

AI transformation is a problem of governance. That is not a pessimistic statement. It is an empowering one. It means the main obstacle to successful, responsible, and valuable AI adoption is not some magical algorithm you have not found yet. It is a set of organizational decisions — about accountability, oversight, risk management, and ethical AI deployment — that any organization can make, starting today.

The organizations that will lead the AI era are not those with the biggest budgets or the most advanced models. They are the ones that build governance as infrastructure — as seriously and as carefully as they build any other critical system. Those organizations will deploy AI confidently, respond to problems quickly, and earn the trust of their customers, employees, regulators, and the public.

The technology is ready. The question is whether the governance is. For most organizations in 2026, the honest answer is: not yet. But that can change — and it has to.
